
How Kubernetes enhances DevOps practices

Kubernetes isn't necessary for DevOps, and you don't need a DevOps team to adopt Kubernetes container management. But here are the ways the two work better together.

Using Kubernetes in a DevOps toolchain enables distributed orchestration to manage infrastructure and configurations consistently across multiple environments.

Automated application deployment and scaling through container management adds operational resiliency to the speed of DevOps pipelines. This benefit is most apparent for organizations that run multiple platforms, such as on-premises infrastructure and public cloud. Without a technology like Kubernetes, deployment practices would vary from one platform to another.

Here's a look at how using Kubernetes benefits the DevOps toolchain, from improved automation and scalability to advanced deployment techniques. But be aware of the risks, such as its complexity and the current shortage of personnel who understand it.

The benefits Kubernetes brings to DevOps

An Agile framework is foundational to most organizations' DevOps delivery cycle, and Kubernetes for distributed orchestration fits well into Agile practices. Kubernetes is designed for service-oriented applications and represents every resource as an API object, so teams can change and release individual services in small, frequent increments, in line with Agile principles.

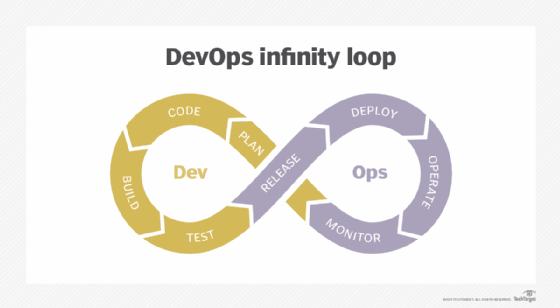

DevOps typically covers each step in a software's lifecycle, from planning and creation through deployment and monitoring.

Kubernetes minimizes infrastructure considerations for DevOps delivery while improving automation, scalability and application resiliency. The metrics that guide a Kubernetes implementation can also feed an overall analytics or AIOps strategy for the application. DevOps teams can set policies for resiliency and scaling through Kubernetes and spend less time managing these factors manually in production.
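
As one concrete example of a scaling policy, the Kubernetes Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue). The Python sketch that follows applies that ratio, assuming the default tolerance of 0.1 and minimum and maximum replica bounds like those set in an autoscaler policy. The function and parameter names are illustrative, not part of any Kubernetes API, and the real autoscaler adds behaviors, such as stabilization windows, that the sketch omits.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Illustrative replica count based on the autoscaler's documented ratio rule."""
    ratio = current_metric / target_metric
    # Inside the tolerance band, keep the current count to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp the result to the policy's minimum and maximum replica counts.
    return max(min_replicas, min(max_replicas, desired))
```

With four replicas averaging 90% CPU against a 50% target, `desired_replicas(4, 90, 50)` returns 8; the team sets the target and bounds once, and the scaling decision itself needs no manual step.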

Kubernetes enables admins to deploy applications anywhere without worrying about the underlying infrastructure. This level of abstraction is a major advantage for enterprises that run containers: a container runs the same way within Kubernetes wherever the cluster is hosted. For example, an organization can deploy applications with the same level of control whether the containers run in a public cloud for remote workers or on on-premises infrastructure.

Kubernetes treats everything as code. Infrastructure and application layers are written as declarative, portable configuration files that teams typically store in a version-controlled repository, so each configuration resource is managed as a versioned artifact. With Kubernetes, a configuration change, such as a maintenance update, is written into the code and deployed rather than implemented manually. This setup saves operations specialists time because they no longer perform these routine tasks by hand.
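
Declarative configuration works because Kubernetes controllers repeatedly compare the declared state with the observed state and act on the difference. The toy Python model that follows illustrates only that comparison step; the `reconcile` function and its inputs are hypothetical, real controllers work through the Kubernetes API server, and the sketch assumes that a workload missing from the declaration should have zero replicas.

```python
from typing import Dict, List, Tuple


def reconcile(desired: Dict[str, int], actual: Dict[str, int]) -> List[Tuple[str, str, int]]:
    """List the actions that move observed replica counts toward the declared state."""
    actions: List[Tuple[str, str, int]] = []
    for name in sorted(desired.keys() | actual.keys()):
        want = desired.get(name, 0)  # assumption: absent from the declaration means zero
        have = actual.get(name, 0)
        if want > have:
            actions.append(("create", name, want - have))
        elif want < have:
            actions.append(("delete", name, have - want))
    return actions
```

Because the declaration, not a sequence of commands, is the source of truth, running the same comparison again after the actions succeed yields an empty list.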

Kubernetes also enables sophisticated deployment techniques, such as testing in production and release rollbacks. Developers can roll out a code change quickly, and roll it back if it misbehaves, which is a key element of a DevOps strategy.
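
Rollbacks depend on the revision history that a Kubernetes Deployment keeps, which `kubectl rollout undo` uses to return to an earlier version. The Python sketch that follows is a hypothetical model of that idea, not the Kubernetes implementation: each rollout records an image as a revision, old revisions are trimmed past a limit loosely modeled on the Deployment's `revisionHistoryLimit` field, and an undo re-records the previous revision as the newest one, roughly as Kubernetes renumbers a restored revision.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Deployment:
    """Toy Deployment that records each rolled-out image as a revision."""
    name: str
    history: List[str] = field(default_factory=list)
    # Old revisions kept in addition to the current one (hypothetical stand-in
    # for a Deployment's revisionHistoryLimit).
    revision_history_limit: int = 10

    @property
    def current(self) -> str:
        return self.history[-1]

    def roll_out(self, image: str) -> None:
        """Record a new revision and trim the oldest ones beyond the limit."""
        self.history.append(image)
        excess = len(self.history) - (self.revision_history_limit + 1)
        if excess > 0:
            del self.history[:excess]

    def undo(self) -> str:
        """Roll back to the previous revision, re-recording it as the newest one."""
        if len(self.history) < 2:
            raise ValueError("no previous revision to roll back to")
        previous = self.history.pop(-2)
        self.history.append(previous)
        return previous
```

After rolling out `app:1.0` and then `app:1.1`, calling `undo()` restores `app:1.0` without rebuilding anything, which is what makes a fast rollback cheap.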

Inside Kubernetes

Kubernetes runs containers via pods and nodes. A pod runs one or more containers, and multiple replicas of a pod can run for scaling and fault tolerance. Nodes, which are physical or virtual machines, run the pods. Nodes are grouped into a cluster, which abstracts the physical hardware resources, whether in an owned data center or the public cloud.
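
To illustrate how pod replicas land on the nodes of a cluster, the Python sketch that follows places each replica on the node with the most free CPU that can still fit it. This is a deliberately simplified stand-in, not the kube-scheduler's actual scoring; it only borrows the general filter-then-score shape, and the function name and millicore inputs are assumptions for illustration.

```python
from typing import Dict, List


def schedule(replicas: int, cpu_request_m: int, free_cpu_m: Dict[str, int]) -> List[str]:
    """Assign each pod replica to a node, returning the chosen node names in order."""
    free = dict(free_cpu_m)  # work on a copy so the caller's view is untouched
    placements: List[str] = []
    for _ in range(replicas):
        # Filter: keep only nodes with enough free CPU (millicores) for one replica.
        candidates = [node for node, cpu in free.items() if cpu >= cpu_request_m]
        if not candidates:
            raise RuntimeError("no node has enough CPU; the pod would stay Pending")
        # Score: prefer the node with the most free CPU; break ties by name.
        chosen = min(candidates, key=lambda node: (-free[node], node))
        free[chosen] -= cpu_request_m
        placements.append(chosen)
    return placements
```

On three equally sized nodes, `schedule(3, 500, {"node-a": 2000, "node-b": 2000, "node-c": 2000})` spreads the replicas across all three, so losing one node takes down only one replica.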

Kubernetes can run on premises, in the cloud or across both as a hybrid deployment. Running Kubernetes in the cloud helps remote DevOps teams because members can reach the cluster from any location, which makes collaboration easier.

Kubernetes is an open source technology. Google originally created it, and the Cloud Native Computing Foundation now manages it. Because the project is open source and exposes a documented API, it can integrate with the diverse toolchains and other services in a DevOps pipeline. For example, many DevOps organizations rely on a CI/CD toolchain that automatically moves code from one step in the process to the next, including containerization and deployment to a Kubernetes cluster.

Kubernetes treats running containers as immutable: rather than changing a container while it operates, it replaces the container with a new one. Immutability, a key characteristic of Kubernetes, is a benefit because it enables IT admins to stop, redeploy and restart a container on the fly, affecting only the service that the container runs.
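
The replace-rather-than-modify principle can be shown with a frozen Python dataclass. In this minimal sketch, `ContainerSpec` and `redeploy` are hypothetical names rather than Kubernetes types: an update produces a new spec, and the running container's spec is never edited in place.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ContainerSpec:
    """Hypothetical, minimal container spec; frozen so it cannot change after creation."""
    name: str
    image: str


def redeploy(running: ContainerSpec, new_image: str) -> ContainerSpec:
    """Build a replacement spec instead of editing the running one in place."""
    return replace(running, image=new_image)
```

Because the old spec survives untouched, the previous version remains available if the replacement needs to be reverted.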

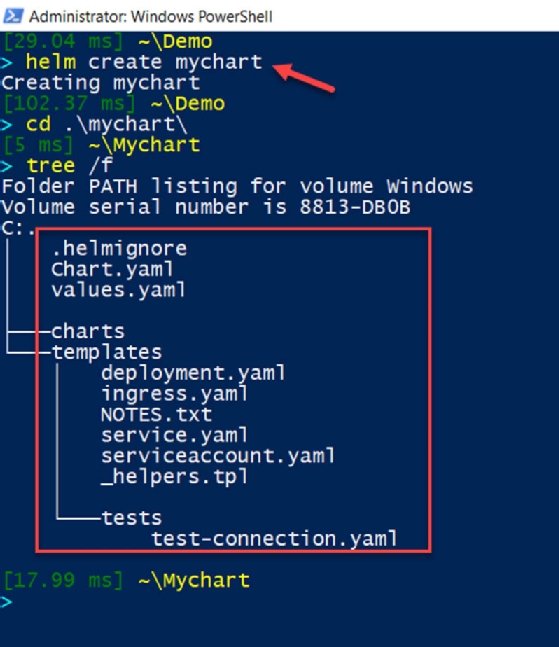

Kubernetes works with Helm, a package manager whose charts are reusable packages that describe the Kubernetes resources an application requires. Operations specialists can use one Helm chart to deploy the same customized application across multiple projects, supplying environment-specific values at install time. Without this resource management option, developers would have to customize software for each environment.
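
The core idea of a chart, one template plus per-environment values, can be sketched in Python with `string.Template`. This is a stand-in, not Helm: real charts use Go templates such as `{{ .Values.replicaCount }}`, take overrides through `helm install --set` or `-f`, and render complete manifests, while the template and default values here are hypothetical and heavily simplified.

```python
from string import Template
from typing import Dict

# Hypothetical, heavily simplified "chart"; real Helm charts use Go template syntax.
CHART_TEMPLATE = Template(
    "name: $name\n"
    "replicas: $replicas\n"
    "image: $image\n"
)

# Chart defaults, playing the role of a chart's values.yaml.
DEFAULT_VALUES: Dict[str, object] = {"name": "web", "replicas": 1, "image": "example/web:1.0"}


def render(overrides: Dict[str, object]) -> str:
    """Merge environment-specific overrides onto the defaults and render the manifest."""
    values = {**DEFAULT_VALUES, **overrides}
    return CHART_TEMPLATE.substitute(values)
```

Staging might call `render({})` while production calls `render({"replicas": 5})`; the application definition stays in one place, and only the values differ per environment.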

The risks of Kubernetes for DevOps teams

Kubernetes is a complex technology, which can make it a risk unto itself. While Kubernetes usage jumped to 48% in 2020 from 27% in 2018, according to the VMware State of Kubernetes Report, there's still a shortage of Kubernetes talent in major employment markets. To integrate DevOps and Kubernetes, don't just build Kubernetes proof-of-concept or pilot projects. Invest in training on the technology.

Organizations must also plan for the way Kubernetes pools resources and shares them among container deployments. Multi-tenancy compounds the complexity of service deployment, because each environment has its own requirements and security controls. Suppose each line of business in a company requires its own Kubernetes cluster and supporting services, such as security, so that one tenant doesn't monopolize cluster resources and hurt the performance of other tenants. Operations must then manage multiple Kubernetes setups. Another concern is ensuring that each tenant can access only its own services, including metrics, logs and metadata. These factors make real-life, at-scale Kubernetes deployments challenging.
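
One common guard against a tenant monopolizing a shared cluster is a per-namespace quota, which Kubernetes provides through the ResourceQuota object. The Python sketch that follows models only the admission arithmetic for CPU; the function, its millicore inputs and the tenant names are hypothetical. As with ResourceQuota, a namespace without a quota is unconstrained, which is why each tenant needs one.

```python
from typing import Dict


def admit(namespace: str, cpu_request_m: int,
          quota_m: Dict[str, int], used_m: Dict[str, int]) -> bool:
    """Admit a pod if its tenant namespace stays within its CPU quota; record the usage."""
    limit = quota_m.get(namespace)
    used = used_m.get(namespace, 0)
    # Namespaces without a quota are not constrained in this model.
    if limit is not None and used + cpu_request_m > limit:
        return False
    used_m[namespace] = used + cpu_request_m
    return True
```

With `team-a` capped at 2,000 millicores, a second request that would push it past the cap is rejected, while `team-b`'s requests are judged against its own separate cap.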

Suboptimal resource use is also a risk. Poor design and hasty moves to the cloud encourage IT teams to dedicate fixed subsets of CPU, memory, disk space and network resources to each service. These silos spur overprovisioning, because each service must be sized for its own maximum anticipated workload. Overprovisioning either increases cloud spending or wastes capacity on owned hardware, and both lead to budget issues.
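
The cost of silos shows up in simple arithmetic: dedicated capacity is the sum of each service's peak, while a shared pool only has to cover the highest combined load at any one time. The Python sketch that follows computes both figures from per-interval usage samples; the function name and the sample numbers in the usage note are hypothetical, and it assumes every series covers the same intervals in the same order.

```python
from typing import Dict, Sequence, Tuple


def dedicated_vs_pooled(usage: Dict[str, Sequence[float]]) -> Tuple[float, float]:
    """Return (dedicated, pooled) capacity needed for per-interval usage samples."""
    # Siloed: each service is provisioned for its own peak.
    dedicated = sum(max(samples) for samples in usage.values())
    # Pooled: capacity only has to cover the highest combined load in any one interval.
    pooled = max(sum(interval) for interval in zip(*usage.values()))
    return dedicated, pooled
```

For three hypothetical services whose vCPU peaks fall at different times, `{"web": [4, 2, 1, 1], "batch": [1, 1, 4, 2], "api": [1, 4, 1, 1]}`, the function returns `(12, 7)`: silos need 12 vCPUs, while a shared pool covers the same workload with 7.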

The DevOps toolchain's future for orchestration

The DevOps toolchain has long been known as a center for automation, whether through CI/CD tools, scripted deployment or a combination of both. However, automation is just part of the distributed orchestration story. The future of DevOps toolchains is decidedly hybrid, supporting legacy applications still in data centers, existing applications in the cloud and applications newly migrated to the cloud. These applications, in turn, will support increasingly hybrid offices and remote workforces, including DevOps teams, that demand responsive applications regardless of work location.

For the DevOps toolchain to be a centralized orchestration point, combine:

- Kubernetes;

- automation of the workflow services;

- cloud resources; and

- DevOps practices and automation tools.