Optimize Docker images for improved efficiency and security

There are several ways -- ranging from Dockerfile adjustments to vulnerability scans -- that IT teams can ensure their Docker images are both efficient and secure.

Any IT admin who works with Docker should understand not only how to build a Docker image, but how to build one that's production ready.

A Docker image is a multilayer template that contains all the necessary instructions and information, such as system libraries and configuration files, to run a software program. Each layer of the Docker image is a specific instruction found within a text document called a Dockerfile.

IT admins can build their own images, using a Dockerfile, or use public images from a registry. Sometimes it's necessary to modify or optimize Docker images -- for example, in some cases, a vendor-supplied Docker image won't meet enterprise IT security requirements.

Beware of image size during this process. When IT admins refactor an existing Docker image, or create a new one, the base image can swell the size of the final image, which means the image takes more time to download and consumes more resources. Also, the more code IT admins place into the image, the higher the likelihood of container security issues.

Rebuild for efficiency

Instead, take a "just-enough-OS" approach to optimize a Docker image. Start from the smallest base image that still runs the application to save resources. There are also lightweight Linux distributions, such as Alpine Linux, that IT admins can use to create very small images. If an organization already uses Ubuntu or Red Hat images, switch to the smallest available variants of those distributions. Test any changes thoroughly.
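As a rough sketch of the just-enough-OS approach, the shell snippet below writes a small Dockerfile that starts from Alpine Linux rather than a full distribution. The alpine:3.19 tag, the apache2 package and the Dockerfile.alpine file name are illustrative assumptions, not details from a specific project.

```shell
# Write a minimal Dockerfile that starts from Alpine instead of a full distribution.
# The tag, package and file name below are assumptions chosen for illustration.
cat > Dockerfile.alpine <<'EOF'
FROM alpine:3.19
# --no-cache avoids storing the package index inside the image
RUN apk add --no-cache apache2
EOF

# Show the generated file for review
cat Dockerfile.alpine
```

With Docker installed, `docker build -f Dockerfile.alpine -t apache-alpine .` followed by `docker image ls` shows how the result compares in size with an existing image.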

As mentioned earlier, images are built in layers. The fewer layers to an image, the more efficient it becomes, in terms of both size and speed. The following commands -- related to an application update, upgrade and install -- in the Dockerfile would be treated as three separate transactions, or layers, which is inefficient:

RUN apt-get update

RUN apt-get upgrade -y

RUN apt-get install apache2 -y

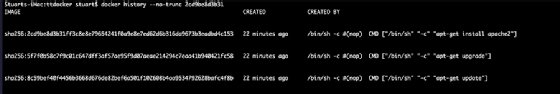

From the image side, this creates three intermediate images when we perform each command individually:

To create them in one layer, combine the commands as follows:

RUN apt-get update && apt-get upgrade -y && apt-get install apache2 -y

It's also possible to split long commands across several lines by ending each line with a backslash (\) and writing the next part of the command on the following line:

RUN apt-get update \
&& apt-get upgrade -y \
&& apt-get install apache2 -y

Every instruction that changes the file system, such as RUN, COPY or ADD, adds a new layer to the image.
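To see the difference between the two styles, the following sketch writes both variants to disk and counts the instructions that add a file system layer. The file names, the ubuntu:22.04 tag and the count_layer_instructions helper are hypothetical, and the grep is only an approximation that assumes uppercase instructions at the start of a line.

```shell
# Variant 1: three separate RUN instructions, one layer each
cat > Dockerfile.separate <<'EOF'
FROM ubuntu:22.04
RUN apt-get update
RUN apt-get upgrade -y
RUN apt-get install -y apache2
EOF

# Variant 2: the same work chained into a single RUN instruction
cat > Dockerfile.combined <<'EOF'
FROM ubuntu:22.04
RUN apt-get update \
    && apt-get upgrade -y \
    && apt-get install -y apache2
EOF

# Count the instructions that add a file system layer (RUN, COPY, ADD).
# Continuation lines start with whitespace, so they are not counted.
count_layer_instructions() {
    grep -cE '^(RUN|COPY|ADD)[[:space:]]' "$1"
}

count_layer_instructions Dockerfile.separate   # prints 3
count_layer_instructions Dockerfile.combined   # prints 1
```

For an image that has already been built, `docker history <image>` lists the actual layers and their sizes.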

Use labels to display information

Use built-in labels to include important information related to an image, such as maintainer, which identifies a user as the Dockerfile's owner. To do this, add this line to the top section of the Dockerfile:

LABEL maintainer="[email protected]"

Labels are key-value pairs. For example, to add a description, enter:

LABEL description="My application description"

To view the labels, run the docker inspect command against the image. When an image carries several labels, the output lists them together:

"Labels": {
    "maintainer": "[email protected]",
    "description": "This text illustrates that label-values can span"
}
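A minimal sketch of the whole label workflow might look like this. The example.com address, the labeled-app image name, the alpine:3.19 base and the show_labels helper are placeholders chosen for illustration.

```shell
# Write a Dockerfile that sets two labels in a single LABEL instruction.
# The address and description are placeholder values.
cat > Dockerfile.labels <<'EOF'
FROM alpine:3.19
LABEL maintainer="admin@example.com" \
      description="My application description"
EOF

# Print only the labels of an image as JSON (requires Docker).
# Defined as a function so nothing runs until it is called.
show_labels() {
    docker image inspect --format '{{json .Config.Labels}}' "$1"
}

# Example, once Docker is available:
#   docker build -f Dockerfile.labels -t labeled-app .
#   show_labels labeled-app
```

Setting both labels in one instruction keeps related metadata in one place in the Dockerfile.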

Manage source files and security

Do not include the source code files in the build with the COPY command. This can leave containers running outdated code, especially in a fast-paced development environment.

Instead, mount the source files from the host -- set aside a folder for this purpose. This ensures lightweight builds and keeps source code files up to date. It also means that when admins make small changes -- for example, a tweak to a non-critical file -- they don't need to rebuild all the Docker images, as the code is held on the Linux host, rather than within the image itself. Mount the folder as read-only, because a container that can modify the source files on the host is a serious security risk.
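One way to express such a read-only mount is with the -v option of docker run and its ro suffix. The sketch below assumes a hypothetical host folder, container path and image name; substitute real values before using it.

```shell
# All three values are hypothetical placeholders; substitute your own.
SRC_DIR="/srv/app/src"        # folder on the Linux host that holds the source files
TARGET_DIR="/var/www/html"    # where the container expects to find them
IMAGE="my-apache:latest"      # image built without the source files inside it

# Format is host-path:container-path:ro, where the trailing "ro" makes the mount read-only
MOUNT_SPEC="${SRC_DIR}:${TARGET_DIR}:ro"

# Wrapped in a function so nothing runs until it is called (requires Docker).
start_with_readonly_source() {
    docker run -d -v "$MOUNT_SPEC" "$IMAGE"
}

echo "$MOUNT_SPEC"   # prints /srv/app/src:/var/www/html:ro
```

Because the files live on the host, editing them there changes what the container serves without a rebuild, while the ro flag prevents the container from writing back to the folder.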

A Dockerfile should produce the same result every time it is built. Investigate any Docker image that behaves differently on first boot.

There are several free and open source tools that admins can use to check Docker images for known vulnerabilities, including those within an image's libraries and dependencies. A related tool, Docker Bench for Security, audits the Docker host and its containers against common configuration best practices. While these are good measures, they don't address common exposure issues, such as exploits and bugs in the application code.

Other tools, such as Clair, Layered Insight and Sysdig, also scan for vulnerabilities and bugs. Some, while comprehensive, are extremely complex. They can, however, enable IT admins to scan not just the current image, but the entire registry, and they can support automated scans to detect vulnerable containers.