Testing infrastructure as code: A complete guide

IaC, when implemented correctly, can benefit enterprises' CD pipelines. But when the code isn't tested before deployment, things can go awry. Follow these strategies for success.

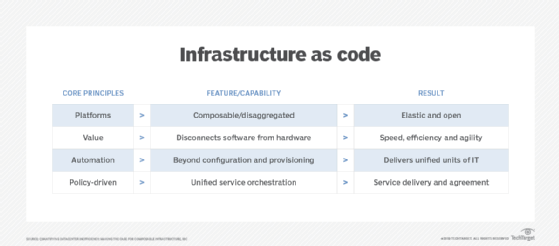

Infrastructure as code (IaC) builds on software-defined technologies to bring more speed and consistency to IT infrastructure provisioning and configuration. Although IaC enables an enterprise to quickly invoke infrastructure to support an application deployment at almost any scale, it relies on code, just like any other software development project -- which means testing is essential.

Use the strategies below to validate a new or updated IaC deployment before it goes live.

What is infrastructure as code?

IaC is a set of practices and tools that enables IT infrastructure to be provisioned, configured and managed through software (code) rather than through traditional manual processes.

Every application requires an underlying IT infrastructure of servers, storage, networking and services. Traditional IT requires human IT administrators to provision and configure resources such as server-based VMs and storage volumes (logical unit numbers) and then connect supporting services such as firewalls or back-end applications such as databases. The goal is to establish an environment where an application can be deployed.

Such manual infrastructure efforts are time-consuming, error-prone and difficult to document. Consider that a modern enterprise application can involve multiple servers, VMs and containers, event-driven functions, cloud services, databases and varied data sources such as data lakes. Mistakes in ad hoc provisioning and configuration can impair application performance or open security vulnerabilities, demanding considerable time to troubleshoot.

With IaC, software files are used as templates to specify the granular infrastructure details needed to provision resources, configure those resources appropriately for the intended task and manage those resources once established.

The IaC template serves to codify and document the desired specification. When a template is executed, administrators know the exact environment being established and can be assured that the same environment is established every time. Configurations can also be routinely checked against the template to ensure that changes are flagged, reported -- and even prevented.
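
Checking a running configuration against its template can be pictured as a recursive comparison of two dictionaries. The sketch below is only an illustration: the `find_drift` helper and the `template` and `deployed` values are hypothetical, and a real IaC platform would obtain the deployed state from its provider APIs.

```python
from typing import Any

def find_drift(desired: dict[str, Any], actual: dict[str, Any], prefix: str = "") -> list[str]:
    """Return readable differences between a desired and an actual configuration."""
    drift = []
    for key in sorted(set(desired) | set(actual)):
        path = f"{prefix}{key}"
        if key not in actual:
            drift.append(f"{path}: missing (expected {desired[key]!r})")
        elif key not in desired:
            drift.append(f"{path}: unexpected value {actual[key]!r}")
        elif isinstance(desired[key], dict) and isinstance(actual[key], dict):
            # Compare nested settings and report them with a dotted path.
            drift.extend(find_drift(desired[key], actual[key], prefix=f"{path}."))
        elif desired[key] != actual[key]:
            drift.append(f"{path}: expected {desired[key]!r}, found {actual[key]!r}")
    return drift

# Hypothetical template and deployed state; port 22 was opened by hand.
template = {"instance_type": "t3.small", "tags": {"env": "test"}, "open_ports": [443]}
deployed = {"instance_type": "t3.small", "tags": {"env": "test"}, "open_ports": [22, 443]}

for line in find_drift(template, deployed):
    print(line)  # open_ports: expected [443], found [22, 443]
```

An empty result means the deployed environment still matches the template; any entry is a change that should be flagged or reverted.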

IaC can be declarative or imperative. Declarative IaC defines the intended end state of the environment, with specific resources and configuration details, and leaves the tool to work out the steps needed to reach that state. Imperative IaC is procedural: It relies on a proper sequence of commands to yield the intended outcome. Thus, declarative defines what, while imperative defines how.
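
The difference can be shown with a toy example in Python. This is a simplified sketch rather than how any particular IaC tool works; the server names and both functions are made up for illustration.

```python
# Imperative: an ordered list of commands. The result depends on the sequence.
def provision_imperatively(servers: set[str]) -> set[str]:
    servers = set(servers)
    servers.add("web-1")
    servers.add("web-2")
    servers.discard("legacy-1")
    return servers

# Declarative: state the desired end result and let a planner derive the steps.
def plan(current: set[str], desired: set[str]) -> dict[str, list[str]]:
    return {
        "create": sorted(desired - current),
        "delete": sorted(current - desired),
    }

current = {"web-1", "legacy-1"}
desired = {"web-1", "web-2"}

print(plan(current, desired))  # {'create': ['web-2'], 'delete': ['legacy-1']}
print(provision_imperatively(current) == desired)  # True
```

Both approaches reach the same environment here, but only the declarative version states the target explicitly, which makes it easier to test against.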

IaC is a central element in DevOps and other development workflow automation where developers can automatically provision an environment before deploying a release candidate to production. As with other software, IaC templates and associated configuration files are created by developers and are subject to careful version control.

How does infrastructure-as-code testing work?

The goal of IaC testing is identical to that of testing any other software: Ensure that the code delivers expected results. However, IaC testing validates the environment before the application is ever deployed. Once an IaC file version is created, it receives an array of static tests that validate the file before it's ever executed. This testing often employs tools that are familiar to developers, including the following:

- compilers, interpreters and parsers, to read through the file and check spelling and common syntax;

- code linters -- such as YAML Lint -- to validate code and look for common command errors; and

- simulation or dry run tools, to examine and validate the overall deployment plan without actually performing the tasks live.

There are also many other tools -- such as Checkov -- that can scan IaC files for security and compliance configuration.
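
To give a sense of what such static checks do, the following Python sketch parses a JSON template and applies two security rules without provisioning anything. The template schema, the rules and the `static_checks` function are assumptions made for this example; they don't reproduce the behavior of Checkov or any other scanner.

```python
import json

# Hypothetical template format, used only for this illustration.
TEMPLATE = """
{
  "resources": [
    {"name": "web_sg", "type": "firewall_rule", "port": 22, "source": "0.0.0.0/0"},
    {"name": "app_disk", "type": "disk", "size_gb": 50, "encrypted": true}
  ]
}
"""

def static_checks(text: str) -> list[str]:
    """Parse a template and return findings without executing it."""
    try:
        doc = json.loads(text)
    except json.JSONDecodeError as err:
        # A file that doesn't parse fails before any rule is evaluated.
        return [f"syntax error: {err.msg} at line {err.lineno}"]
    findings = []
    for res in doc.get("resources", []):
        name = res.get("name", "<unnamed>")
        if (res.get("type") == "firewall_rule"
                and res.get("source") == "0.0.0.0/0"
                and res.get("port") == 22):
            findings.append(f"{name}: SSH is open to the whole internet")
        if res.get("type") == "disk" and not res.get("encrypted", False):
            findings.append(f"{name}: disk is not encrypted")
    return findings

print(static_checks(TEMPLATE))  # ['web_sg: SSH is open to the whole internet']
```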

Once the code is checked and validated, it can move on to unit testing. IaC can involve multiple file components where each file performs certain tasks, so it's important to test each file or unit in isolation. When each module successfully completes its unit testing, the files can undergo integration testing to validate the files in combination and ensure that the code yields an intended result. There are numerous simulators or emulators that can help with unit and integration testing before the code goes live. Common simulation tools include the following:

- Azure Functions Core Tools, to provide development support for creating, developing, testing, running and troubleshooting Azure Functions;

- Azurite emulator, to provide a free local environment for testing Azure Blob, Queue Storage and Table Storage applications;

- Azure Cosmos DB Emulator, to simulate the Cosmos database and support local application testing;

- LocalStack, to mock up AWS cloud and serverless environments; and

- Moto, a Python library, to mock AWS services in tests.

The ability to simulate or emulate IaC files without the need to provision actual resources can be especially beneficial for cloud applications -- potentially saving hundreds of dollars in cloud costs from using actual cloud infrastructure for testing.
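
The same idea can be sketched without any of the tools above by passing an in-memory stand-in to the provisioning code. In this Python example, `FakeCloud` and `provision_storage` are hypothetical names and the interface is invented; an emulator such as LocalStack would play the role of `FakeCloud` in practice.

```python
class FakeCloud:
    """In-memory stand-in for a cloud API (hypothetical interface)."""

    def __init__(self) -> None:
        self.buckets: dict[str, dict] = {}

    def create_bucket(self, name: str, versioning: bool) -> None:
        if name in self.buckets:
            raise ValueError(f"bucket {name!r} already exists")
        self.buckets[name] = {"versioning": versioning}

def provision_storage(cloud, env: str) -> list[str]:
    """The unit under test: creates the buckets one environment needs."""
    names = [f"{env}-logs", f"{env}-artifacts"]
    for name in names:
        cloud.create_bucket(name, versioning=True)
    return names

# No real resources are created, so the test costs nothing to run.
cloud = FakeCloud()
created = provision_storage(cloud, "staging")
assert created == ["staging-logs", "staging-artifacts"]
assert all(bucket["versioning"] for bucket in cloud.buckets.values())
```

Because the cloud client is passed in as a parameter, the same function can later run against a real provider client without modification.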

Once IaC files have received some fundamental static and simulated testing, developers can create the automated tests to actually run the IaC files. At that point, IaC files can receive final testing and validation in production environments. Here, resources and services are actually provisioned and configured according to the IaC files and then checked to ensure that the established environment provides the resources, services, authentication and security required for the intended application.

The most important aspect of any IaC file testing is the proper documentation of results. Test cases generate data that should be recorded for further examination. It's this documentation that delineates pass or fail conditions. When the tests pass, the IaC files can be used for deployment. If the tests fail at any point, developers can readily understand what failed and why, and then focus their remediation efforts on efficient fixes and improvements.
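
One simple way to document results is to collect every check's outcome in a structured report. The Python sketch below assumes each check is a function that raises `AssertionError` on failure; the check names, values and report fields are illustrative only.

```python
import json
from datetime import datetime, timezone

def run_checks(checks: dict) -> dict:
    """Run each check and record a pass or fail entry with the failure reason."""
    results = []
    for name, check in checks.items():
        try:
            check()
            results.append({"test": name, "status": "pass", "detail": ""})
        except AssertionError as err:
            results.append({"test": name, "status": "fail", "detail": str(err)})
    return {
        "run_at": datetime.now(timezone.utc).isoformat(),
        "passed": all(r["status"] == "pass" for r in results),
        "results": results,
    }

# Hypothetical checks against values a test run would normally query.
def check_port_open():
    assert 443 in [443, 8443], "port 443 should be open"

def check_disk_size():
    size_gb = 20
    assert size_gb >= 50, f"disk is {size_gb} GB, expected at least 50"

report = run_checks({"port_open": check_port_open, "disk_size": check_disk_size})
print(json.dumps(report, indent=2))
```

The `passed` field gives the pipeline a single pass or fail condition, while the `detail` entries tell developers what failed and why.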

Infrastructure-as-code testing strategies

IaC doesn't typically involve a traditional programming language, such as C++ or Java. Instead, IaC usually relies on more straightforward coding that uses a description language -- often in human-readable JSON or YAML format -- to delineate the desired environment and configuration. When development teams execute that code, an IaC-capable platform parses the description file and uses automation and orchestration to perform the associated provisioning and configuration tasks. Many systems configuration platforms, including Terraform, Chef, Puppet, SaltStack, Ansible, Juju and AWS CloudFormation, possess IaC functionality.

As with any software instructions, IaC files require copious testing before production use. Testing is a vital opportunity to check the file for errors and oversights, and to gauge how an IaC deployment affects the IT environment. For example, if the file implements a new or different configuration, validate the new configuration for proper operation, performance, security and compliance.

Testing also enables IT staff to experiment with special or edge cases and build confidence in the IaC codebase, which often consists of diverse files that perform specific tasks. Approach infrastructure-as-code testing with the same tactics and strategies as any other software project.

Types of testing for infrastructure as code

Types of testing are listed below, though it won't be necessary to perform all of these tests for every IaC file change.

Static or style checks. One of the easiest ways to check an IaC file is simply to read it and verify that the file's contents meet the established criteria for readability, format, variable names and commenting. This kind of static analysis doesn't validate the code's functionality but provides a useful sanity check to confirm it meets fundamental style and quality requirements.

Perform these checks manually or use static code analysis, lint testing or similar tools to automate the code review. The choice of tool usually depends on the language or platform on which the IaC file runs. For example, a tool such as RuboCop checks whether Ruby code meets the criteria outlined in the Ruby style guide, while StyleCop, an open source static code analysis tool, checks C# code for conformance to Microsoft's .NET Framework design guidelines.
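
An automated style check can be as small as a pair of rules applied to every variable definition. The Python sketch below assumes a team convention of snake_case names and mandatory descriptions; the convention, the `variables` data and the `style_findings` function are hypothetical.

```python
import re

# Assumed house rule: lowercase words separated by single underscores.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")

def style_findings(variables: dict[str, dict]) -> list[str]:
    """Report naming and commenting problems; functionality isn't evaluated."""
    findings = []
    for name, spec in variables.items():
        if not SNAKE_CASE.match(name):
            findings.append(f"{name}: name is not snake_case")
        if not spec.get("description", "").strip():
            findings.append(f"{name}: missing description")
    return findings

variables = {
    "instance_count": {"description": "Number of web servers", "default": 2},
    "DBPassword": {"description": ""},
}

print(style_findings(variables))
# ['DBPassword: name is not snake_case', 'DBPassword: missing description']
```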

Unit tests. Beyond an assessment of format and style, validate the functionality of each IaC file. Unit testing is often the simplest and fastest approach. As with most software, IaC is rarely a single file; it is usually an extensive series of files that serve as units or components. Each unit performs a specific job, and developers use tools within an IaC-type platform -- such as a Chef cookbook or an Ansible playbook -- to string the units together and invoke a complete provisioning and configuration process. When an IaC unit file is created or changed, execute that specific unit file alone in a test environment to validate proper operation.

This focused form of testing enables teams to isolate the cause and effect of any defect for a specific unit. However, a unit testing environment typically doesn't reflect production, so the results don't reveal how the unit file will interact with other IaC files or the overall workflow.

System tests. Once an IaC file passes individual unit testing, put it into the broader process with other IaC files to validate the complete workflow. System testing is often far more involved than unit testing, because developers must perform tests on every workflow that involves the unit file.

To ensure that results match expectations in realistic situations, run system tests on production systems, though don't touch live data or integrate with live production applications. In some cases, IT administrators might provision staging infrastructure on which they can perform infrastructure-as-code testing in relative isolation. System tests also gather basic provisioning and configuration performance metrics to measure any effects of IaC file and workflow changes.

Integration tests. In typical software development and testing scenarios, integration tests are the most involved and comprehensive form of prerelease assessment, and they include the most sophisticated attributes of a deployment, such as backup, high availability and failover. In IaC coding environments, integration tests generally simulate the creation of full workflow deployments. For example, they might provision and configure infrastructure, deploy and configure an application, and connect that application to others within the environment. The goal is to ensure that the complete environment behaves as anticipated.

In effect, integration testing is as close as developers can get to live production. Testing at this level is time-consuming and might last for days, or even weeks.

Blue/green tests. Sometimes loosely referred to as A/B testing, this type of beta test places the new (green) instance into production, alongside the old (blue) instance. End users can run either instance, or they are grouped and redirected to the appropriate instance. This is a common practice for enterprise applications, as it enables live testing and validation for an updated application, yet maintains the current version for support and, if needed, rollback.

It's unusual for IaC workflows to use blue/green testing. In most cases, if the updated workflow deploys and functions as expected in high-level integration tests -- or even in system testing -- there is little reason to beta test it.

Still, an application that undergoes significant updates or refactoring might require a substantially different operational environment that justifies such A/B testing. For example, a traditional monolithic application might be refactored into a modular microservices application. This would demand a radically different infrastructure and justify A/B testing to help ensure a suitable new infrastructure while still running the existing application.
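
Grouping users and redirecting them to the appropriate instance can be sketched with a deterministic hash. This Python example is an assumption about one possible routing rule, not a description of any load balancer; `choose_environment` and the user IDs are made up.

```python
import hashlib

def choose_environment(user_id: str, green_percent: int) -> str:
    """Send a stable share of users to green; the same user always gets the same answer."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # value from 0 to 99
    return "green" if bucket < green_percent else "blue"

# Route roughly 10% of a sample population to the new infrastructure.
users = [f"user-{n}" for n in range(1000)]
share = sum(choose_environment(u, 10) == "green" for u in users) / len(users)
print(f"green share: {share:.1%}")
```

Setting `green_percent` to 0 sends everyone to the existing environment, which serves as the rollback path; setting it to 100 completes the cutover.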

Infrastructure-as-code testing challenges

Because IaC focuses on infrastructure, IaC file testing can encounter a series of potential challenges related to local and cloud infrastructure. Common IaC testing challenges can include the following:

- Resource sprawl. Deployment consumes resources and services, but those resources and services must be properly released once the testing is completed. Pass or fail, a teardown must free all resources and services involved in the testing. If not, those valuable commodities can remain tied up and unused.

- Loose version control. IaC files aren't second-class software. Those files demand the same level of version and repository control used for the enterprise applications. Loose control can make it difficult to document changes or updates and can allow improper code merges, resulting in poor or improper deployments, which can be hard to detect and require time-consuming troubleshooting.

- Configuration drift. IaC is a software development process, so manual configuration changes made by an engineer at a console should be strongly discouraged -- or even directly prohibited. Otherwise, the deployed configuration might no longer match the IaC file specs, and those undetected differences make problems far harder to troubleshoot. And if the code is run again after manual changes are made, it can revert those changes and potentially cause an outage.

- Code reuse. IaC is all about automation, and it's common to reuse code for multiple projects. The problem is that a small configuration error in shared code can propagate to every environment that reuses it, where it becomes harder to trace. Don't assume that it's OK to simply reuse code. It's important to check and correct configuration issues in IaC testing.

- Meeting dependencies. IaC can involve many dependencies, including cloud APIs. IaC files must be updated and rechecked any time a dependency changes. This is critical to ensure that an infrastructure continues to operate as expected. Establish a mechanism for tracking dependencies and for implementing tests and updates any time a dependency is affected.
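
The teardown requirement described under resource sprawl lends itself to a context manager that releases resources whether the test passes or fails. The Python sketch below uses a hypothetical `InMemoryCloud` class in place of a real provider API.

```python
from contextlib import contextmanager

class InMemoryCloud:
    """Hypothetical stand-in for a provider API; tracks what is allocated."""

    def __init__(self) -> None:
        self.active: set[str] = set()

    def create(self, name: str) -> None:
        self.active.add(name)

    def destroy(self, name: str) -> None:
        self.active.discard(name)

@contextmanager
def temporary_environment(cloud, names):
    created = []
    try:
        for name in names:
            cloud.create(name)
            created.append(name)
        yield created
    finally:
        # Release in reverse order, pass or fail.
        for name in reversed(created):
            cloud.destroy(name)

cloud = InMemoryCloud()
try:
    with temporary_environment(cloud, ["vm-1", "disk-1"]):
        assert "vm-1" in cloud.active
        raise AssertionError("simulated test failure")
except AssertionError:
    pass

print(cloud.active)  # set()
```

Even though the simulated test fails, nothing remains allocated afterward.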

IaC testing tools

IaC files can be tested with many of the tools and processes already in place for DevOps and other agile-style development paradigms. But there are plenty of tools that cater specifically to IaC and infrastructure configurations. Popular tools for IaC building, testing and deployment include the following:

- Ansible. Ansible is an open source tool, offered commercially as Red Hat Ansible Automation Platform, that can be used to deploy, configure and manage systems and applications through YAML playbooks.

- AWS CloudFormation. The CloudFormation tool enables AWS users to model, provision and manage AWS resources and services, along with third-party infrastructure, through YAML or JSON templates. CloudFormation can also roll back IaC deployments if problems or issues arise.

- Azure Resource Manager templates. Azure Resource Manager is a tool used to provision and build infrastructure within Microsoft Azure using JSON files.

- CFEngine. CFEngine is an open source tool for automating and managing secure and compliant infrastructure.

- Chef. The Chef Infra tool can automate the provisioning, deployment and management of local, cloud and hybrid cloud infrastructures.

- Google Cloud Deployment Manager. Google's Cloud Deployment Manager is used to provision and manage infrastructure using Google Cloud services.

- Pulumi. Pulumi is an open source tool intended to offer multilanguage and multi-cloud support for IaC in order to provision, deploy and manage infrastructure.

- Puppet. Puppet offers an infrastructure configuration management tool with its own declarative language to model, provision, deploy and configure systems.

- SaltStack. SaltStack -- or, simply, Salt -- organizes IaC code from repositories and uses Python to provision, manage and enforce infrastructure configurations.

- Terraform. Terraform is an open source tool using a dedicated configuration language called HashiCorp Configuration Language (HCL) to describe, provision and manage infrastructure. The Terraform CLI can check HCL files before they are applied, for example through its validate and plan commands.

Monitoring IaC testing and why it's important

Monitoring plays an important role in infrastructure-as-code testing. Although application monitoring emphasizes performance- and business-centric KPIs, IaC monitoring is primarily concerned with metrics, reports, alerts and logs.

When an IaC platform such as Chef, Puppet or Ansible provisions infrastructure and performs a deployment, it also produces logs that track activity and report errors. These logs form the foundation of troubleshooting and auditing, ensuring that the environment works properly, maintains the desired configuration and adheres to established business standards for compliance and security.
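
As a rough illustration of how such logs feed troubleshooting, the Python sketch below counts entries by severity and extracts the errors. The log format shown is invented for this example and doesn't match the output of Chef, Puppet, Ansible or any other specific platform.

```python
import re
from collections import Counter

# Hypothetical provisioning log; real formats vary by platform.
LOG = """\
2024-05-01T10:00:01Z INFO  network: created subnet app-subnet
2024-05-01T10:00:04Z WARN  compute: retrying create for vm-2
2024-05-01T10:00:09Z ERROR compute: quota exceeded for vm-2
2024-05-01T10:00:10Z INFO  storage: attached disk-1
"""

LINE = re.compile(
    r"^(?P<time>\S+)\s+(?P<level>[A-Z]+)\s+(?P<component>\w+):\s+(?P<message>.*)$"
)

def summarize(log_text: str):
    """Count log lines per level and collect the error messages."""
    levels = Counter()
    errors = []
    for raw in log_text.splitlines():
        match = LINE.match(raw)
        if not match:
            continue  # skip lines that don't follow the assumed format
        levels[match["level"]] += 1
        if match["level"] == "ERROR":
            errors.append(f'{match["component"]}: {match["message"]}')
    return levels, errors

levels, errors = summarize(LOG)
print(dict(levels))  # {'INFO': 2, 'WARN': 1, 'ERROR': 1}
print(errors)        # ['compute: quota exceeded for vm-2']
```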

Infrastructure-as-code testing might rely on some performance monitoring to ensure that the provisioned and configured infrastructure -- and the workload deployed within that infrastructure -- performs within acceptable levels. From an IaC perspective, performance monitoring is an indirect assessment of the provisioned infrastructure. For example, if an IaC file changes the provisioning or configuration of a network connection, performance testing can measure the effect of those changes. Ongoing monitoring can also alert administrators to problematic trends over time that might demand new attention and changes.
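
Measuring the effect of a change usually means comparing metrics against a baseline. The Python sketch below flags any metric that grew by more than a chosen tolerance; the metric names, values and the 10% threshold are assumptions for illustration, and it treats higher values as worse.

```python
def regressions(baseline: dict[str, float], current: dict[str, float],
                tolerance: float = 0.10) -> dict[str, float]:
    """Return metrics that grew by more than `tolerance` (a fraction) over baseline."""
    flagged = {}
    for metric, before in baseline.items():
        after = current.get(metric)
        if after is None or before <= 0:
            continue  # nothing to compare against
        change = (after - before) / before
        if change > tolerance:
            flagged[metric] = round(change, 3)
    return flagged

# Hypothetical measurements taken before and after an IaC file change.
baseline = {"provision_seconds": 120.0, "p95_latency_ms": 40.0}
after_change = {"provision_seconds": 126.0, "p95_latency_ms": 52.0}

print(regressions(baseline, after_change))  # {'p95_latency_ms': 0.3}
```

Here provisioning time rose by 5%, which is within tolerance, while latency rose by 30% and would warrant a closer look at the network change.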