Azure Kubernetes Service (AKS)

What is Azure Kubernetes Service (AKS)?

Azure Kubernetes Service is a managed container orchestration service based on the open source Kubernetes system, which is available on the Microsoft Azure public cloud. An organization can use AKS to handle critical functionality such as deploying, scaling and managing Docker containers and container-based applications.

AKS became generally available in June 2018 and is most frequently used by software developers and IT operations staff.

Kubernetes is the de facto open source platform for container orchestration, but it typically carries significant cluster management overhead. AKS takes on much of that overhead, reducing the complexity of deployment and management tasks. AKS is designed for organizations that want to build scalable applications with Docker and Kubernetes on Azure.

An AKS cluster can be created using the Azure command-line interface (CLI), the Azure portal or Azure PowerShell. Users can also deploy clusters declaratively with Azure Resource Manager (ARM) templates.
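As a rough illustration of the template-driven option, the following Python sketch builds a minimal Azure Resource Manager template for a single AKS cluster and serializes it to JSON. The resource type `Microsoft.ContainerService/managedClusters` and the property names follow the ARM schema for AKS, but the `apiVersion`, VM size, node count, pool name and DNS prefix are assumptions chosen for the example. Check the current AKS template reference before deploying anything.

```python
import json


def build_aks_template(cluster_name: str, location: str, node_count: int = 3) -> dict:
    """Return a minimal ARM template describing one AKS cluster (illustrative sketch)."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "resources": [
            {
                "type": "Microsoft.ContainerService/managedClusters",
                # Assumed API version; newer versions exist and may add fields.
                "apiVersion": "2023-05-01",
                "name": cluster_name,
                "location": location,
                # A system-assigned managed identity lets AKS manage other Azure resources.
                "identity": {"type": "SystemAssigned"},
                "properties": {
                    # Prefix for the API server's DNS name (assumed to match the cluster name).
                    "dnsPrefix": cluster_name,
                    "agentPoolProfiles": [
                        {
                            "name": "nodepool1",          # hypothetical pool name
                            "count": node_count,
                            "vmSize": "Standard_DS2_v2",  # assumed VM size
                            "osType": "Linux",
                            "mode": "System",             # system pool hosts core cluster pods
                        }
                    ],
                },
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(build_aks_template("myakscluster", "eastus"), indent=2))
```

The generated JSON file could then be passed to a template deployment with the Azure CLI or Azure PowerShell. Keeping the template in source control makes cluster creation repeatable.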

AKS features and benefits

The primary benefits of AKS are flexibility, automation and reduced management overhead for administrators and developers. For example, during deployment AKS automatically creates and configures the Kubernetes control plane that manages the worker nodes. It also handles a range of other tasks, including Azure Active Directory (AD) integration (Azure AD has since been renamed Microsoft Entra ID), connections to monitoring services and configuration of advanced networking features such as HTTP application routing. Users can monitor an individual cluster directly or view all clusters with Azure Monitor.

Because AKS is a managed service, Microsoft makes new Kubernetes versions available to the service as they are released and carries out the upgrade process. Users decide whether and when to upgrade the Kubernetes version of their own AKS cluster, which reduces the risk of accidental workload disruption.

In addition, AKS can scale the number of nodes up or down to accommodate fluctuations in resource demand. For additional processing power, AKS also supports node pools with graphics processing units (GPUs), which can be vital for compute-intensive workloads such as scientific applications.
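The scaling idea can be sketched as a capacity calculation: add up the CPU that running and pending workloads request, divide by what one node can offer, and keep the result within the node pool's minimum and maximum node counts. This is a deliberately simplified illustration, not the algorithm the Kubernetes cluster autoscaler actually uses (which simulates pod scheduling); the function and parameter names are hypothetical.

```python
import math


def desired_node_count(
    pending_cpu_millicores: int,
    used_cpu_millicores: int,
    allocatable_per_node_millicores: int,
    min_count: int,
    max_count: int,
) -> int:
    """Estimate nodes needed for the requested CPU, clamped to the pool's limits.

    Simplified illustration only; real autoscalers also consider memory,
    scheduling constraints and scale-down delays.
    """
    if allocatable_per_node_millicores <= 0:
        raise ValueError("allocatable_per_node_millicores must be positive")
    total_demand = pending_cpu_millicores + used_cpu_millicores
    # Round up: a partially needed node is still a whole node.
    needed = math.ceil(total_demand / allocatable_per_node_millicores)
    # Never go below the pool minimum or above the pool maximum.
    return max(min_count, min(max_count, needed))


if __name__ == "__main__":
    # 5 CPUs in use plus 2 CPUs pending, on nodes offering 1.9 CPUs each.
    print(desired_node_count(2000, 5000, 1900, min_count=1, max_count=5))
```

Clamping to a minimum and maximum mirrors how AKS node pools are configured for autoscaling: demand drives the count, but the administrator bounds both availability and spend.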

Users can access AKS through the Azure portal, the Azure CLI or templates deployed with Azure Resource Manager. The service also integrates with Azure Container Registry (ACR) for Docker image storage and supports persistent data with Azure Disks. The Azure portal also lets users view Kubernetes resources in AKS.
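To show what persistent storage with Azure Disks looks like from the Kubernetes side, here is a Python sketch that builds a PersistentVolumeClaim manifest. It assumes the cluster offers the built-in `managed-csi` storage class backed by Azure managed disks; the claim name and size are hypothetical.

```python
import json


def azure_disk_pvc(name: str, size_gi: int, storage_class: str = "managed-csi") -> dict:
    """Build a PersistentVolumeClaim that asks AKS to provision an Azure managed disk."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # Azure disks are typically mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            # Assumed storage class name; list available classes on your own cluster.
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": f"{size_gi}Gi"}},
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests as well as YAML.
    print(json.dumps(azure_disk_pvc("data-disk", 5), indent=2))
```

When a pod references this claim, the storage class provisions the disk dynamically, so the data outlives any individual container.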

AKS integrates with Azure AD to provide role-based access control (RBAC), which secures and governs access to Kubernetes resources.

AKS also lets users create and modify custom Azure tags on AKS-related resources, including resources that end users create through Kubernetes, such as disks and public IP addresses.

AKS use cases

AKS is designed for deploying and managing container-based applications, but there are numerous use cases for the service within that scope.

For example, an organization could use AKS to automate and streamline the migration of applications into containers. First, it could package the application as a container image, push that image to ACR and then use AKS to run the container in a preconfigured environment. Similarly, AKS can deploy, scale and manage diverse groups of containers, which helps with launching and operating microservices-based applications.
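The final step of that migration, running an image stored in ACR on AKS, comes down to a Kubernetes Deployment that references the image by its registry address. The sketch below builds such a manifest in Python. ACR images are addressed as `<registry>.azurecr.io/<repository>:<tag>`; the app, registry and tag names are hypothetical, and the sketch assumes the cluster has already been granted permission to pull from the registry.

```python
import json


def acr_deployment(app: str, registry: str, tag: str, replicas: int = 2) -> dict:
    """Build a Deployment that runs `replicas` copies of an image stored in ACR."""
    image = f"{registry}.azurecr.io/{app}:{tag}"
    labels = {"app": app}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": app},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template's labels.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": app, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(acr_deployment("web", "myregistry", "v1"), indent=2))
```

Scaling the migrated application later is then a matter of changing `replicas` rather than provisioning new servers.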

AKS can complement agile software development practices, such as continuous integration/continuous delivery (CI/CD) and DevOps. For example, a developer could commit code to a repository such as GitHub, have a build pipeline package it as a container image and push the image to ACR, and then rely on AKS to run the workload in containers.
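One pass of such a pipeline can be sketched as an ordered list of commands. The Python function below only builds the command strings (it does not run them) so the sequence is easy to inspect: log in to ACR, build the image tagged with a short commit hash, push it, then point an existing AKS deployment at the new image. The `az acr login`, `docker build`, `docker push` and `kubectl set image` commands are real, but the registry, app, deployment and container names are hypothetical, and the sketch assumes simple names that need no shell quoting.

```python
def release_commands(
    registry: str, app: str, commit_sha: str, deployment: str, container: str
) -> list[str]:
    """Return, without executing them, the shell commands one CI/CD run might issue."""
    # Tag images with a short commit hash so each build is traceable to its source.
    image = f"{registry}.azurecr.io/{app}:{commit_sha[:7]}"
    return [
        f"az acr login --name {registry}",    # authenticate Docker to the registry
        f"docker build -t {image} .",         # build the image from the local Dockerfile
        f"docker push {image}",               # upload the image to ACR
        # Trigger a rolling update of the running deployment on AKS.
        f"kubectl set image deployment/{deployment} {container}={image}",
    ]


if __name__ == "__main__":
    for cmd in release_commands("myregistry", "web", "abc1234def", "web", "web"):
        print(cmd)
```

In practice a hosted pipeline service would run these steps on each commit, but the order of operations stays the same.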

AKS can also simplify data streaming. For example, it can run applications that process real-time data streams for quick analysis.

Other uses for AKS involve the internet of things (IoT). The service, for instance, could help ensure adequate compute resources to process data from thousands, or even millions, of discrete IoT devices. Similarly, AKS can help ensure adequate compute for big data tasks, such as model training in machine learning environments.

The architecture of AKS

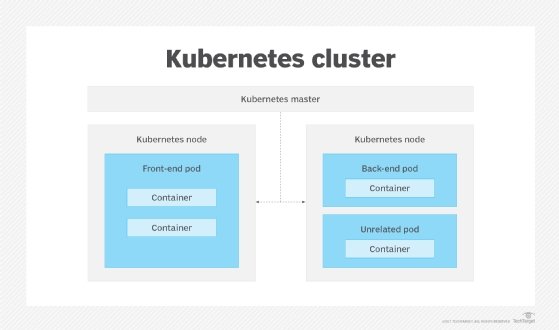

When a user creates an AKS cluster, a control plane is automatically created and configured. The control plane is a managed Azure resource the user cannot access directly. The control plane contains resources that:

- provide interaction for management tools;

- maintain the state and configuration of the Kubernetes cluster;

- schedule and specify which nodes run the workload; and

- oversee smaller actions, such as replicating Kubernetes pods or handling node operations.

The user defines the size and number of nodes, and the Azure platform configures secure communication between the nodes and the control plane.

An AKS cluster has at least one node, and each node is an Azure virtual machine (VM). The node's CPU and memory resources are what allow it to run workloads as part of the cluster. Nodes with the same configuration are grouped together into node pools.
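Conceptually, a node pool is the set of nodes that share one configuration. The Python sketch below makes that grouping explicit by collecting hypothetical nodes under a (VM size, OS type) key. In AKS the relationship actually runs the other way (you define a pool and AKS creates identical nodes from it), so treat this purely as an illustration of "same configuration, same pool".

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """Hypothetical, simplified view of a cluster node."""
    name: str
    vm_size: str
    os_type: str


def group_into_pools(nodes: list[Node]) -> dict[tuple[str, str], list[str]]:
    """Group node names by (VM size, OS type), i.e., by shared configuration."""
    pools: dict[tuple[str, str], list[str]] = defaultdict(list)
    for node in nodes:
        pools[(node.vm_size, node.os_type)].append(node.name)
    return dict(pools)


if __name__ == "__main__":
    nodes = [
        Node("node-a", "Standard_DS2_v2", "Linux"),
        Node("node-b", "Standard_DS2_v2", "Linux"),
        Node("gpu-a", "Standard_NC6s_v3", "Linux"),  # e.g., a GPU pool
    ]
    for config, members in group_into_pools(nodes).items():
        print(config, members)
```

Separating general-purpose and GPU nodes into different pools is what lets a cluster scale each kind of capacity independently.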

AKS deployments also span two resource groups. The first contains only the Kubernetes service resource, while the second, the node resource group, contains all of the infrastructure resources associated with the cluster. The cluster needs a service principal or managed identity to create and manage these and other Azure resources.
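The node resource group is created automatically, and by default its name is derived from the cluster's own resource group, cluster name and region in the form `MC_<resource group>_<cluster name>_<location>`. The Python sketch below computes that default and lets a custom name (the ARM `nodeResourceGroup` property) take precedence; the input names are hypothetical, and the sketch does not model any length limits Azure may apply.

```python
from typing import Optional


def node_resource_group_name(
    resource_group: str,
    cluster_name: str,
    location: str,
    custom_name: Optional[str] = None,
) -> str:
    """Return the node resource group name: the custom one if set, else the AKS default."""
    if custom_name:
        return custom_name
    # Default naming pattern: MC_<resource group>_<cluster name>_<location>
    return f"MC_{resource_group}_{cluster_name}_{location}"


if __name__ == "__main__":
    print(node_resource_group_name("myResourceGroup", "myAKSCluster", "eastus"))
```

Knowing this name helps when looking for the VMs, disks and load balancers that back a cluster, since they live in the node resource group rather than alongside the cluster resource.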

AKS security, monitoring and compliance

AKS supports RBAC through Azure Active Directory, which enables an administrator to grant Kubernetes access based on Azure AD user identities and group memberships. Admins can monitor container health using processor and memory metrics collected from containers, Kubernetes nodes and other points in the infrastructure. Container logs are also collected and stored for more detailed analytics and troubleshooting. Monitoring data is available through the Azure portal, the Azure CLI and application programming interfaces (APIs).
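As an example of tying Kubernetes access to Azure AD groups, the sketch below builds a Kubernetes RoleBinding that gives every member of an Azure AD group read-only access to one namespace through the built-in `view` ClusterRole. With Azure AD integration, a group subject is identified by the group's object ID; the binding name, namespace and ID used here are hypothetical placeholders.

```python
import json


def group_view_binding(namespace: str, aad_group_object_id: str) -> dict:
    """Build a RoleBinding granting an Azure AD group read-only access to a namespace."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        # Hypothetical binding name.
        "metadata": {"name": "dev-team-view", "namespace": namespace},
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": "view",  # built-in read-only role
        },
        "subjects": [
            {
                "apiGroup": "rbac.authorization.k8s.io",
                "kind": "Group",
                # Azure AD groups are referenced by object ID, not display name.
                "name": aad_group_object_id,
            }
        ],
    }


if __name__ == "__main__":
    placeholder_id = "00000000-0000-0000-0000-000000000000"  # hypothetical group ID
    print(json.dumps(group_view_binding("dev", placeholder_id), indent=2))
```

Binding roles to groups rather than to individual users means access changes happen in Azure AD group membership, with no Kubernetes edits required.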

AKS meets the requirements of System and Organization Controls (SOC) reporting and complies with major standards and regulations, including those of the International Organization for Standardization (ISO), the Health Insurance Portability and Accountability Act (HIPAA) and the Health Information Trust Alliance (HITRUST). AKS is also certified as Kubernetes conformant by the Cloud Native Computing Foundation, which oversees open source Kubernetes.

When an AKS cluster is created or scaled up, new nodes are deployed with the most recent security updates. Azure also automatically applies operating system (OS) security patches to Linux-based nodes; however, Windows Server nodes do not receive the latest updates automatically, so administrators must upgrade those node pools to apply them.

AKS availability and costs

In the default Free tier, AKS charges nothing for Kubernetes cluster management; paid tiers, which add an uptime service-level agreement, are billed per cluster. In either case, AKS users are billed for the underlying compute, storage, networking and other cloud resources consumed by the containers that make up the application running in the Kubernetes cluster.
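Because most of the bill comes from the underlying resources, a first-order compute estimate is simple arithmetic: node count × hourly VM rate × hours in the month. The Python sketch below uses 730 hours as an average month; the hourly rate in the example is a made-up placeholder, not a real Azure price, and storage, networking and other charges are deliberately left out.

```python
HOURS_PER_MONTH = 730  # average hours in a month (8,760 hours / 12)


def estimate_monthly_node_cost(
    node_count: int, hourly_rate_per_node: float, hours: float = HOURS_PER_MONTH
) -> float:
    """Rough monthly VM cost for a node pool; excludes storage, networking and other charges."""
    if node_count < 0 or hourly_rate_per_node < 0 or hours < 0:
        raise ValueError("inputs must be non-negative")
    return node_count * hourly_rate_per_node * hours


if __name__ == "__main__":
    # Three nodes at a hypothetical $0.10 per hour.
    print(round(estimate_monthly_node_cost(3, 0.10), 2))
```

Since node count drives this figure directly, the autoscaling limits on each node pool double as a cost control.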

AKS is available in many Azure regions, including the Eastern, Central and Western U.S.; Central Canada; Northern and Western Europe; and Southeast Asia. Microsoft continues to add regions over time.

Kubernetes vs. Docker

Docker is a technology used to create and run containers. It consists of a client CLI tool and a container runtime: the CLI sends instructions to the runtime, which creates containers and runs them on the OS. Docker is also used to build and manage the container images from which containers are created.

Kubernetes, by comparison, is a container orchestration technology. It runs containers in pods, its smallest deployable unit; a pod holds one or more tightly coupled containers that together support part of an application or microservice. Docker used to be the default container runtime for Kubernetes, but Kubernetes now supports other runtimes, such as containerd, which AKS uses by default.
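To make the pod concept concrete, the sketch below builds a Pod manifest with two containers: a web server and a helper that reads the server's log files from a shared volume. The container names, paths and image tags are illustrative assumptions. Containers in the same pod are scheduled onto the same node and can share volumes and a network namespace, which is what distinguishes a pod from a loose collection of containers.

```python
import json


def two_container_pod() -> dict:
    """Build a Pod in which a helper container reads logs written by the main container."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "web-with-logger"},  # hypothetical name
        "spec": {
            # Scratch volume shared by both containers for the pod's lifetime.
            "volumes": [{"name": "logs", "emptyDir": {}}],
            "containers": [
                {
                    "name": "web",
                    "image": "nginx:1.25",  # illustrative image tag
                    "volumeMounts": [{"name": "logs", "mountPath": "/var/log/nginx"}],
                },
                {
                    "name": "log-reader",
                    "image": "busybox:1.36",  # illustrative image tag
                    # Follow the access log written by the web container.
                    "command": ["sh", "-c", "tail -F /logs/access.log"],
                    "volumeMounts": [{"name": "logs", "mountPath": "/logs"}],
                },
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(two_container_pod(), indent=2))
```

Note that the manifest only describes what should run; a container runtime on the node (containerd, in the case of AKS) actually pulls the images and starts the containers.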

The key distinction is that Docker is a technology for building and running containers, while Kubernetes is an orchestration framework that schedules and manages containers across a cluster. Kubernetes does not build container images or run containers itself; it delegates that work to a container runtime.

What are the differences between AKS and Service Fabric?

Azure Service Fabric is a platform-as-a-service offering designed to facilitate the development, deployment and management of applications for the Azure cloud platform.

The term fabric is used as a synonym for framework. Applications created in the Service Fabric environment are composed of separate microservices that communicate with each other through service APIs.

Service Fabric is a good fit for applications built using Windows Server containers or ASP.NET applications hosted on Internet Information Services (IIS). Service Fabric provides a path toward scalable, cloud-based setups within the context of more traditional programming methods. It is often used to lift and shift existing Windows-based applications to Azure without having to rearchitect them completely.

The biggest difference between AKS and Service Fabric is that AKS runs only containerized applications orchestrated by Kubernetes, whereas Service Fabric is geared toward microservices and supports a number of different runtime strategies. For example, Service Fabric can deploy both Docker and Windows Server containers, as well as applications that are not containerized at all.

Both Service Fabric and AKS offer integrations with other Azure services, but Service Fabric integrates with other Microsoft products and services more deeply because it was wholly developed by Microsoft.

Unless the application under development relies heavily on the Microsoft technology stack, a cloud-agnostic orchestration service like Kubernetes will be more appropriate for most containerized apps.

AKS vs. ACS

Prior to the release of AKS, Microsoft offered Azure Container Service (ACS), which supported numerous open source container orchestration platforms, including Docker Swarm and Mesosphere Data Center Operating System, as well as Kubernetes. With AKS, the focus is exclusively on the use of Kubernetes. ACS users with a focus on Kubernetes can potentially migrate from ACS to AKS.

However, AKS differs from ACS in several ways that a user must address before migrating. For example, AKS uses managed disks, so a user must convert unmanaged disks to managed disks before assigning them to AKS nodes. Similarly, a user must convert any persistent storage volumes or custom storage class objects associated with Azure disks to use managed disks.

In addition, stateful applications can be affected by downtime and data loss during a migration from ACS to AKS, so developers and application owners should perform due diligence before making a move.
