container image

What is a container image?

A container image is an unchangeable, static file that includes executable code so it can run an isolated process on IT infrastructure. The image comprises the system libraries, system tools and other platform settings a software program requires to run on a containerization platform, such as Docker or CoreOS rkt. A container started from the image shares the OS kernel of its host machine.

A container image is assembled from file system layers built onto a parent or base image. These layers encourage reuse of components, so the user does not have to create everything from scratch for every project. Technically, a base image has no parent and is the starting point for a wholly new image, while a parent image is an existing image that a new image modifies. In practice, however, the terms are used interchangeably.
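The layering idea can be illustrated with a small model. This is a minimal sketch, not how any container runtime actually stores layers: it assumes each layer is a plain dictionary of file paths, and it uses `None` as a hypothetical deletion marker. All names and file contents are invented for illustration.

```python
from typing import Dict, List, Optional

# A layer maps file paths to contents; None marks a file deleted by that layer.
# (Hypothetical model for illustration only.)
Layer = Dict[str, Optional[str]]


def flatten_layers(layers: List[Layer]) -> Dict[str, str]:
    """Merge layers bottom-up; later layers override or delete earlier files."""
    merged: Dict[str, str] = {}
    for layer in layers:
        for path, content in layer.items():
            if content is None:
                merged.pop(path, None)  # deletion marker hides the lower file
            else:
                merged[path] = content
    return merged


# Layers inherited from a parent image, reused unchanged.
parent_layers: List[Layer] = [{"/bin/sh": "shell", "/etc/os-release": "base-os"}]

# Layers added on top for one specific application.
app_layers: List[Layer] = [
    {"/app/main.py": "print('hello')"},
    {"/etc/os-release": None, "/app/config.ini": "debug=false"},
]

image_fs = flatten_layers(parent_layers + app_layers)
print(sorted(image_fs))
```

Because the parent layers are passed in unchanged, a second application could reuse the same `parent_layers` list and add only its own layers on top.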

Container image types

A user creates a container image from scratch with the build command of a container platform, such as Docker. The container image maker can update it over time to introduce more functionality, fix bugs or otherwise change the product, and can modify the image to use it as the basis for a new container.

For increased automation, the user describes the set of layers in a Dockerfile, from which the platform assembles the image. Each instruction in the Dockerfile is a build step, and instructions that change the file system, such as RUN, COPY and ADD, create a new layer in the image. Continuous integration tools, such as Jenkins, can also automate a container image build.
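To make the relationship between a Dockerfile and its build steps concrete, the following sketch splits a Dockerfile into ordered instructions. It is a simplified illustration, not Docker's own parser: it handles only comments and backslash line continuations. The example Dockerfile is hypothetical, and the set of instructions treated as adding file system content (RUN, COPY, ADD) is an assumption based on Docker's documentation.

```python
from typing import List, Tuple

# Assumption: these are the instructions that add file system content.
FILESYSTEM_INSTRUCTIONS = {"RUN", "COPY", "ADD"}


def parse_dockerfile(text: str) -> List[Tuple[str, str]]:
    """Return (instruction, arguments) pairs in build order."""
    steps: List[Tuple[str, str]] = []
    pending = ""
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.endswith("\\"):
            pending += line[:-1].strip() + " "  # join continued lines
            continue
        full = (pending + line).strip()
        pending = ""
        instruction, _, arguments = full.partition(" ")
        steps.append((instruction.upper(), arguments.strip()))
    return steps


# Hypothetical Dockerfile used only for this example.
EXAMPLE = """\
# build a small Python service
FROM python:3.12-slim
WORKDIR /app
COPY . /app
RUN pip install --no-cache-dir \\
    -r requirements.txt
CMD ["python", "main.py"]
"""

steps = parse_dockerfile(EXAMPLE)
layer_steps = [step for step in steps if step[0] in FILESYSTEM_INSTRUCTIONS]

for instruction, arguments in steps:
    print(instruction, arguments)
```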

Many software vendors create publicly available images of their products. For example, Microsoft offers a SQL Server 2017 container image that runs on Docker. Container adopters should be aware of the existence of corrupt, fake and malicious publicly available container images, sometimes disguised to resemble official vendors' images.

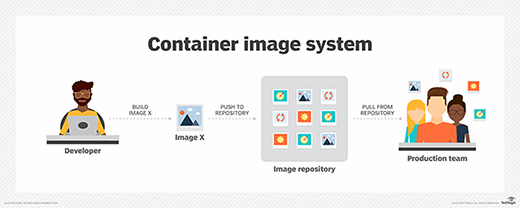

Container images are stored in a registry, either private or public, such as Docker Hub. The image creator pushes the image to the registry, and a user pulls it when they want to run it as a container. Features such as Docker Content Trust rely on digital signatures to help verify that image files downloaded from public repositories are original and unaltered. However, this added authenticity verification does not prevent the creation or distribution of malware.
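The "original and unaltered" check can be approximated with a content digest. The sketch below is a deliberately simplified stand-in: it compares a SHA-256 digest of downloaded bytes against a digest obtained from a trusted source. It does not implement Docker Content Trust or any signature scheme, and the function names and byte strings are hypothetical.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    """Return a digest string in the common 'sha256:<hex>' form."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def verify_download(data: bytes, expected_digest: str) -> bool:
    """True only if the downloaded bytes match the trusted digest."""
    return sha256_digest(data) == expected_digest


# The publisher computes the digest once and shares it over a trusted channel.
published_bytes = b"example layer contents"
trusted_digest = sha256_digest(published_bytes)

# The consumer recomputes the digest after download.
print(verify_download(published_bytes, trusted_digest))
print(verify_download(b"example layer contents, tampered", trusted_digest))
```

A matching digest shows only that the bytes are the ones the publisher released. As noted above, it says nothing about whether those bytes are safe to run.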

Some images are purposefully minimal, while others have large file sizes. Generally, they are in the range of tens of megabytes.

Container image benefits and attributes

The container image format is designed to download quickly and start instantly. A running container generally consumes less compute and memory than a comparable virtual machine.

Each image has a true identifier, a SHA-256 digest, and tools typically display only its first 12 characters. An image also has a virtual size measured in terms of its distinct underlying layers. Images can be tagged or left untagged; untagged images are searchable through the true identifier only.
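The relationship between a full identifier and its 12-character short form can be sketched as follows. This is an illustrative model that assumes identifiers are SHA-256 hex digests with an optional `sha256:` prefix. The helper names and the input bytes are hypothetical, and the prefix lookup only mimics the idea of resolving a short ID.

```python
import hashlib
from typing import List


def short_id(image_id: str, length: int = 12) -> str:
    """Drop an optional 'sha256:' prefix and keep the first `length` characters."""
    hex_part = image_id.split(":", 1)[-1]
    return hex_part[:length]


def resolve_prefix(prefix: str, known_ids: List[str]) -> List[str]:
    """Return every known full ID whose hex part starts with `prefix`."""
    return [i for i in known_ids if i.split(":", 1)[-1].startswith(prefix)]


# Hypothetical identifiers derived from placeholder bytes.
id_a = "sha256:" + hashlib.sha256(b"image config A").hexdigest()
id_b = "sha256:" + hashlib.sha256(b"image config B").hexdigest()
known = [id_a, id_b]

print(short_id(id_a))
print(resolve_prefix(short_id(id_a), known) == [id_a])
```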

To be widely interoperable, container images rely on open standards and operate across different infrastructure, including virtual and physical machines and cloud-hosted instances. In container deployments, applications are isolated from one another and abstracted from the underlying infrastructure.

Container image drawbacks

Enterprise IT organizations must monitor for fraudulent images and train users in best practices for pulling from public repositories. To reduce the risk, an organization can restrict users to a limited list of approved images.
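A limited list of images can be enforced with a simple check before any pull. The following is a minimal sketch under stated assumptions: the registry host `registry.example.com` and the repository names are hypothetical, and the reference parsing is a simplified approximation that only strips a trailing tag or digest.

```python
# Hypothetical allowlist of repositories an organization has approved.
APPROVED_REPOSITORIES = {
    "registry.example.com/base/python",
    "registry.example.com/base/nginx",
}


def repository_of(reference: str) -> str:
    """Strip a trailing ':tag' or '@digest' from an image reference."""
    name = reference.split("@", 1)[0]  # drop a digest, if present
    last_slash = name.rfind("/")
    last_colon = name.rfind(":")
    if last_colon > last_slash:  # a colon after the last slash is a tag
        name = name[:last_colon]
    return name


def is_approved(reference: str) -> bool:
    """True if the reference points at an approved repository."""
    return repository_of(reference) in APPROVED_REPOSITORIES


print(is_approved("registry.example.com/base/python:3.12"))
print(is_approved("docker.io/someuser/python:latest"))
```

Comparing the colon position with the last slash keeps a registry port, as in `localhost:5000/app`, from being mistaken for a tag.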

Beyond authenticity, container image sprawl is a risk. Organizations can end up with a variety of container images that accomplish the same thing, or unused images that were never deleted. Stopped containers are also not automatically removed and continue to consume storage resources. Commands such as docker rmi and docker rm remove unused images and stopped containers, respectively.
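The cleanup logic can be modeled without calling Docker at all. This sketch assumes a hypothetical inventory of images and containers held in memory, and it reports what a cleanup would target: stopped containers first, then images that no running container uses. It does not invoke `docker rm` or `docker rmi`.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Container:
    name: str
    image: str
    running: bool


def cleanup_candidates(
    images: List[str], containers: List[Container]
) -> Tuple[List[str], List[str]]:
    """Return (stopped container names, images no running container uses)."""
    stopped = [c.name for c in containers if not c.running]
    in_use = {c.image for c in containers if c.running}
    # Once stopped containers are removed, only running ones pin an image.
    unused = [image for image in images if image not in in_use]
    return stopped, unused


# Hypothetical inventory for illustration.
images = ["web:1", "web:2", "db:1"]
containers = [
    Container(name="web", image="web:2", running=True),
    Container(name="old-web", image="web:1", running=False),
]

stopped, unused = cleanup_candidates(images, containers)
print(stopped)
print(unused)
```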